When replicating to Microsoft Azure using Zerto Virtual Replication (ZVR) 5.0 (or Azure Site Recovery), protected vDisks cannot be larger than 1TB, because Microsoft limits the maximum page blob size, and therefore the maximum disk size, to 1TB. It’s rumored that Microsoft plans to increase this to 8TB later this year, but in the meantime, how do you work around this limit?

The good news is that there is a solution! On the protected VM, configure multiple sub-1TB disks. Don’t use exactly 1TB as the disk size: in vSphere that equates to 1024GB, which is above the Azure page blob limit. Instead, use a value such as 990GB to stay under the limit while keeping a decent amount of space per disk. Here you can see I’ve created 2 x 990GB VMDKs for my VM:
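To make the sizing rule concrete, here is a minimal Python sketch. It assumes the Azure cap can be treated as "strictly less than 1024GB" (the article's point that a 1TB VMDK is 1024GB and therefore too big); `fits_azure_limit` and `disks_needed` are hypothetical helpers for illustration, not part of Zerto or Azure tooling.

```python
import math

# Assumption for this sketch: a disk must be strictly smaller than 1024GB
# (1TB as vSphere counts it) to fit under the Azure page blob limit.
AZURE_DISK_LIMIT_GB = 1024


def fits_azure_limit(disk_gb: int) -> bool:
    """Return True if a VMDK of this size stays under the assumed Azure limit."""
    return disk_gb < AZURE_DISK_LIMIT_GB


def disks_needed(target_volume_gb: int, disk_gb: int = 990) -> int:
    """Hypothetical helper: how many equal sub-limit VMDKs a pooled volume needs."""
    if not fits_azure_limit(disk_gb):
        raise ValueError(f"{disk_gb}GB is not under the {AZURE_DISK_LIMIT_GB}GB limit")
    return math.ceil(target_volume_gb / disk_gb)


print(fits_azure_limit(1024))  # False: "1TB" in vSphere is 1024GB
print(fits_azure_limit(990))   # True
print(disks_needed(1980))      # 2, matching the 2 x 990GB disks used here
print(disks_needed(3000))      # 4
```

Rounding up with `math.ceil` means the pooled volume is never smaller than the target, at the cost of some spare capacity on the last disk.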

I’m now going to initialize the disks in my FileServer2 VM:

Next, create a new storage pool containing the 990GB disks in ‘File and Storage Services’ in Server Manager:

On the new storage pool I now create a new ‘Simple’ virtual disk:

The virtual disk can be thin or fixed provisioned; the choice is up to you:

I configured the virtual disk size to match the available space in the storage pool (1.93TB) so that it spans both disks in the pool:
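Where the 1.93TB figure comes from can be checked with a little arithmetic. A ‘Simple’ layout stripes data without mirror or parity copies, so its capacity is roughly the sum of the member disks; Windows then reports that size in binary units (1TB = 1024GB). This sketch ignores storage pool metadata overhead, which is an assumption, so the size Windows offers may be slightly lower.

```python
def simple_space_capacity_tb(disk_sizes_gb: list[int]) -> float:
    """Approximate capacity of a 'Simple' (striped, no resiliency) virtual disk.

    Simple spaces keep no redundant copies, so capacity is the sum of the
    member disks, converted to binary TB (1TB = 1024GB) as Windows shows it.
    Pool metadata overhead is ignored in this sketch.
    """
    total_gb = sum(disk_sizes_gb)
    return total_gb / 1024


print(round(simple_space_capacity_tb([990, 990]), 2))  # 1.93
```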

I can now create a volume to present the virtual disk as a drive letter (don’t use D:\, as Azure VMs use it for the temporary scratch disk):

Here you can see the 1.93TB volume in Windows Explorer:

I now have a 1.93TB volume spanning 2 x 990GB virtual disks, and my VM is replicating to Azure using ZVR 5.0 (I had already configured the protection):

On recovery, the VM is configured to use a Standard DS2_V2 VM size, which has 2 cores, 7GB of RAM and 4 vDisks; that is enough to run my FileServer2 VM in Azure:

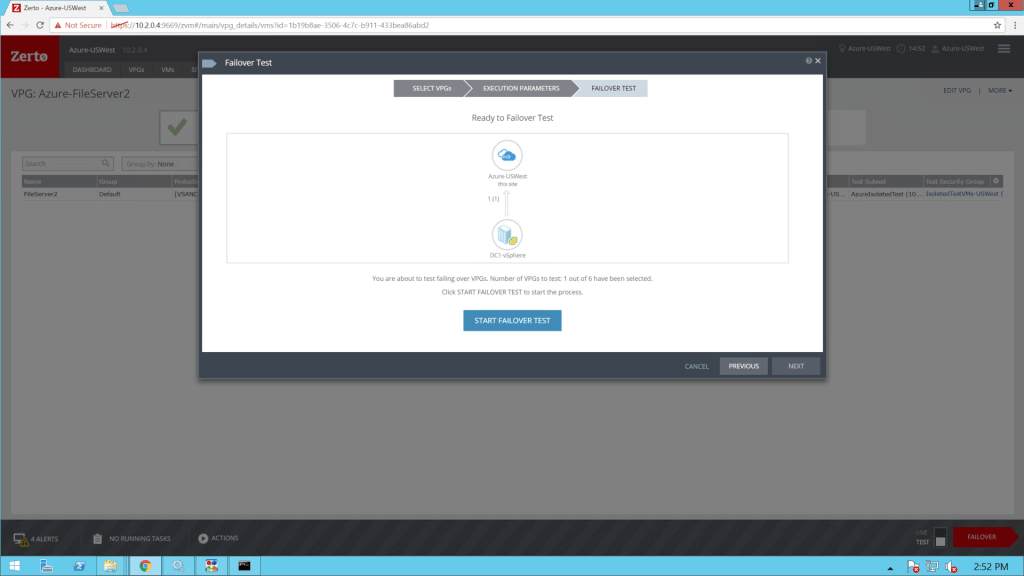

I’m now going to perform a failover test to bring the FileServer2 VM online in my US West Azure subscription:

After 3 minutes the FileServer2 VM is running in Azure:

I now assign the VM a public IP so I can RDP into it from my desktop (as I have no networking in place between my desktop and the Azure VM network):

I can now RDP to the FileServer2 VM in Azure and access my single 1.93TB volume:

I can also create data on the volume as normal:

And that’s it! You can also use the same workflow to overcome the disk performance limits of standard storage accounts. Standard HDD disks in Azure are limited to 500 IOPS and roughly 60MB/sec of throughput each. By pooling multiple disks, you not only overcome the space limit but can also increase the available performance. For example, spanning a virtual disk and volume across 10 x 990GB virtual disks can give you nearly 10TB of volume space, with a theoretical maximum of 5,000 IOPS and 600MB/sec of throughput. Of course, this depends on many factors, but it’s certainly a performance upgrade without the cost of premium storage.
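The scaling claim above is simple multiplication, sketched here in Python using the per-disk figures quoted in this post (500 IOPS and about 60MB/sec per Standard HDD disk). It is a best-case model only: it assumes I/O spreads evenly across every disk and that nothing else becomes the bottleneck. `pooled_limits` is a hypothetical helper for illustration.

```python
# Per-disk figures for Standard HDD disks, as quoted in this post.
STANDARD_DISK_IOPS = 500
STANDARD_DISK_MBPS = 60


def pooled_limits(num_disks: int, disk_gb: int = 990) -> dict:
    """Theoretical best case for a Simple space striped across num_disks.

    Assumes I/O is spread evenly over every disk and ignores other caps
    (VM size limits, storage account limits, workload pattern).
    """
    return {
        "capacity_tb": num_disks * disk_gb / 1024,  # binary TB, as Windows reports
        "iops": num_disks * STANDARD_DISK_IOPS,
        "throughput_mbps": num_disks * STANDARD_DISK_MBPS,
    }


limits = pooled_limits(10)
print(f"{limits['capacity_tb']:.2f}TB, {limits['iops']} IOPS, {limits['throughput_mbps']}MB/sec")
# 9.67TB, 5000 IOPS, 600MB/sec
```

In practice the recovery VM size also matters: each Azure VM size has a maximum number of data disks and its own disk IOPS and throughput caps, so pick a size that can attach all the pooled disks.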

Please share if you found this useful! Thanks,

Joshua
