Since I first posted about my hyperconverged home lab back in Feb 2017, it’s been a massive hit with the VMware community. With an integrated NAS, switch, and 4 motherboards on 1 PSU, it was fun to build. I actually built it way back in 2015, and since then I’ve used it extensively for both my job and learning. However, it was starting to lack horsepower when I wanted to run nested hypervisors, and I still had 5 separate Intel NUCs, each with a cumbersome power brick.

I use my lab daily to perform my job as a sales engineer. I need to demo solutions and test my PowerShell scripts at a reasonable scale with multiple hypervisors. Any investment in my lab enables me to do the best job that I can. Also, it’s fun. 😊

So, in November I decided to fix this by rehoming the hyperconverged home lab, which I renamed v1.0. The net result was 9 ESXi hosts in 1 case! In case you missed it, check it out here.

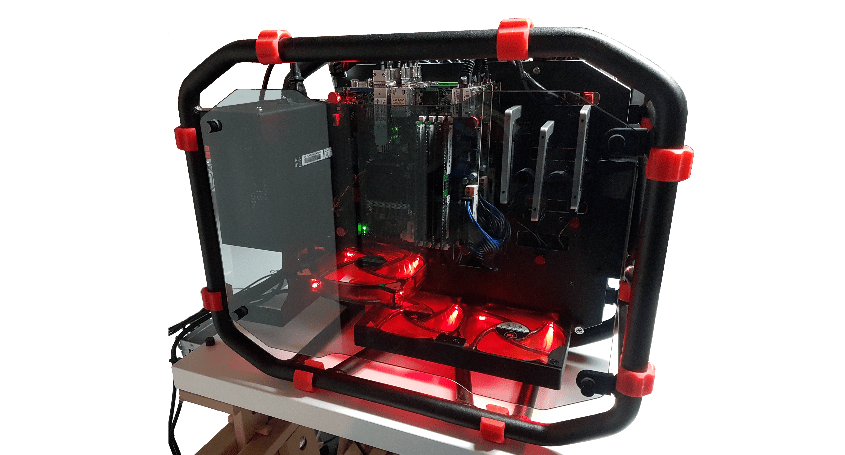

This left my Inwin D-Frame Mini case empty. My pride and joy sat there with nothing to do:

I’d be lying if I said this wasn’t on purpose. I wanted to empty it so I had an excuse to build something new, something harder, better, faster, stronger, capable of running demos at a minimum of SMB scale. The answer? The hyperconverged home lab v2.0!

Having already proven I could run multiple server motherboards on the same PSU, I knew that I wanted 3 hosts in my new home lab, and I wanted to use HCI software to be truly converged. I contemplated 4 hosts, but the 4th would’ve been difficult to cool, and even though there would have been enough power, I didn’t want to push it too far. There was also the cost of 4 of everything vs 3! After 20 or so configurations, shopping lists, and much deliberation, I settled on this:

Here is the build:

- 3 x Supermicro X10SDV-8C-TLN4F+ Xeon D-1541, 45W each, total 135W

- 3 x 128GB Crucial CT4K32G4RFD424A (4x32GB) DDR4 ECC

- 3 x Samsung 960PRO 512GB NVMe M.2

- 3 x Crucial 2TB SSD MX300 2.5”

- 1 x Inwin D-Frame Mini (now a collector item!)

- 1 x Seasonic Platinum 400W PSU Fanless SS-400FL2

- 2 x ATX 20-Pin/24-Pin Cable Y Splitters (from moddiy.com)

- 2 x 120mm Cougar CF-D12HB-R 16.6 dB(A)

- 1 x Ubiquiti EdgeSwitch 16XG, 12 x SFP+, 4 x 10GBaseT, 160 Gbps non-blocking

This results in a total capacity of:

- 24 cores, 48 threads, 40.8GHz

- 384GB RAM

- 1.5TB NVMe cache

- 6TB flash capacity

- 10GbE end-to-end connectivity
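A quick way to sanity-check those totals is to multiply one host’s spec by 3. The Python sketch below does exactly that. The 1.7 GHz per-core figure is an assumption, back-calculated from the 40.8GHz total (40.8 / 24 cores), not a published clock speed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    """Spec of one HCHL v2.0 node, taken from the parts list above."""
    cores: int
    threads_per_core: int
    ghz_per_core: float  # assumption: 1.7 GHz, i.e. 40.8 GHz / 24 cores as quoted in the post
    ram_gb: int
    nvme_cache_gb: int
    capacity_ssd_gb: int


def cluster_totals(host: Host, count: int) -> dict:
    """Multiply one host's spec by the number of identical hosts in the cluster."""
    return {
        "cores": host.cores * count,
        "threads": host.cores * host.threads_per_core * count,
        "ghz": round(host.cores * host.ghz_per_core * count, 1),
        "ram_gb": host.ram_gb * count,
        "nvme_cache_gb": host.nvme_cache_gb * count,
        "capacity_tb": host.capacity_ssd_gb * count / 1000,
    }


node = Host(cores=8, threads_per_core=2, ghz_per_core=1.7,
            ram_gb=128, nvme_cache_gb=512, capacity_ssd_gb=2000)
totals = cluster_totals(node, count=3)
print(totals)
```

The NVMe cache comes out as 1536GB, which is the "1.5TB" in the list.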

If money were infinite, I would’ve gone for the Supermicro X10SDV-12C-TLN4F+ or even the X10SDV-16C-TLN4F+ for the additional compute, but at roughly $300 and $1,100 more per board respectively, I decided against it. I reasoned my cluster would be sufficiently balanced with 8 cores (16 threads) per board. My mistake was thinking that the 16 threads counted towards the compute available in the hypervisor; they don’t. If I could go back in time, I would stump up the extra $900 for an additional 12 cores and 20.4GHz of capacity, but I can’t, so hey ho.
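The upgrade maths above can be sketched in a few lines of Python. It counts physical cores only and reuses the same 1.7 GHz per-core figure that the 20.4GHz number implies (20.4 / 12); that per-core figure is an assumption, and the 12- and 16-core Xeon D parts may well be clocked differently.

```python
BOARDS = 3
GHZ_PER_CORE = 1.7  # assumption: per-core figure implied by the post (20.4 GHz / 12 cores)


def upgrade_delta(extra_cost_per_board: int, cores_now: int, cores_upgraded: int,
                  boards: int = BOARDS) -> dict:
    """Extra cost and physical-core capacity from swapping every board for a bigger one.

    Only physical cores are counted; hyper-threads are deliberately ignored,
    since they don't add real compute capacity in the hypervisor.
    """
    extra_cores = (cores_upgraded - cores_now) * boards
    return {
        "extra_cost": extra_cost_per_board * boards,
        "extra_cores": extra_cores,
        "extra_ghz": round(extra_cores * GHZ_PER_CORE, 1),
    }


twelve_core = upgrade_delta(300, cores_now=8, cores_upgraded=12)
sixteen_core = upgrade_delta(1100, cores_now=8, cores_upgraded=16)
print("12C boards:", twelve_core)
print("16C boards:", sixteen_core)
```

With the rough per-board prices above, the 12-core route is the $900 / 12 core / 20.4GHz option, while the 16-core route would have cost around $3,300 more.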

With the components ready to start building, this is where the real fun started. First, I routed the 2 Y-splitter motherboard power cables, 3 SATA cables, and a single HDD power cable around the back of the case:

Power cabling done, the next step was to install the 3 x 2TB SSDs:

Time for the first motherboard to go in with power, case fan, power switch and SSD connected:

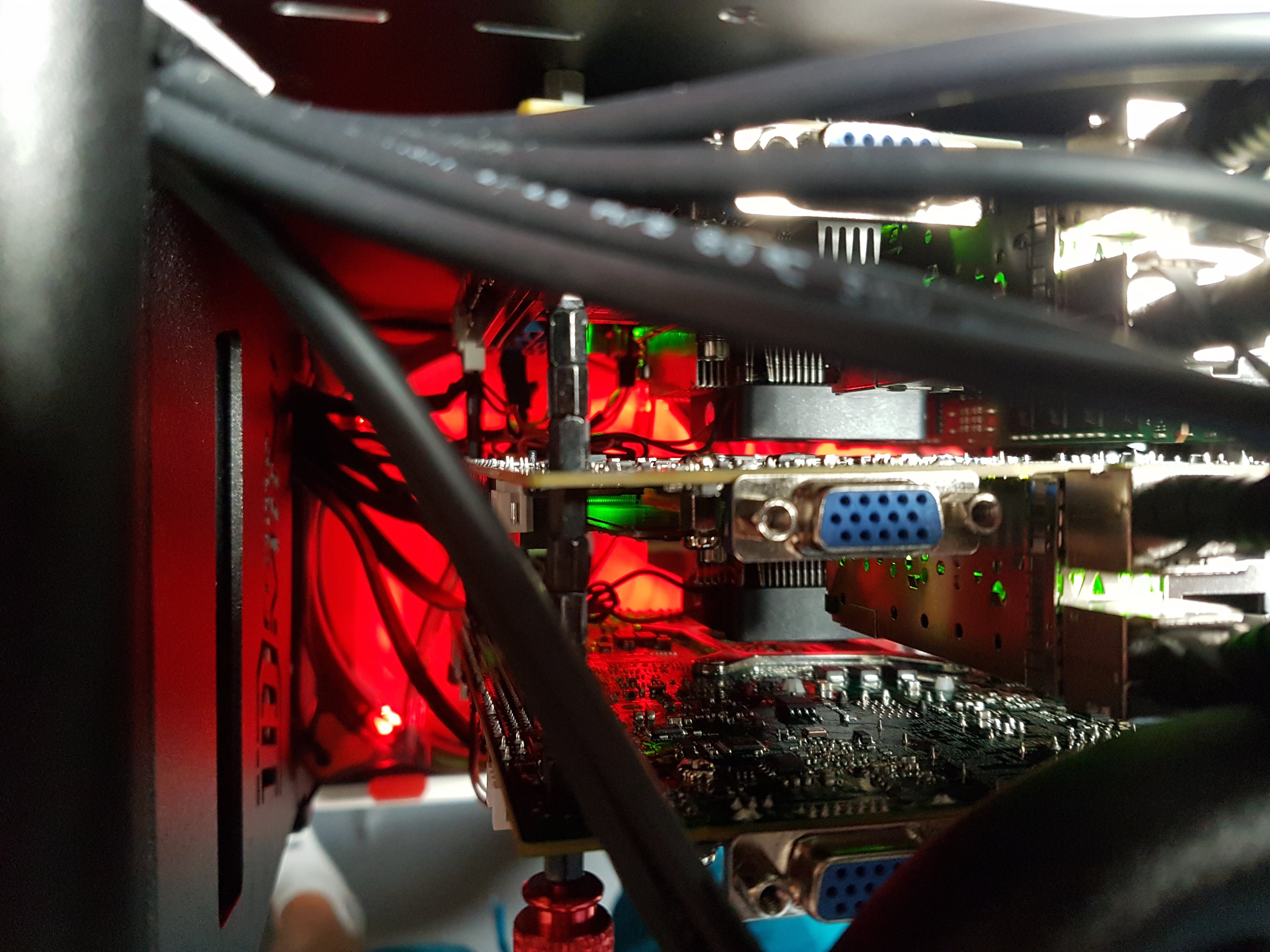

Using multiple black M3 motherboard standoffs (6 x 4 to be exact), I then mounted the second motherboard straight on top, with an air gap between them to ensure sufficient cooling:

That’s 2 down, 1 to go. Time for a quick refill of my favorite Yorkshire ale (this blog is called Virtually Sober for a reason!):

With my thirst for ale quenched, I then installed the 3rd motherboard. Thinking that I might need to remove this motherboard frequently, I used red thumbscrews (with 1 more M3 standoff) for the finishing touch:

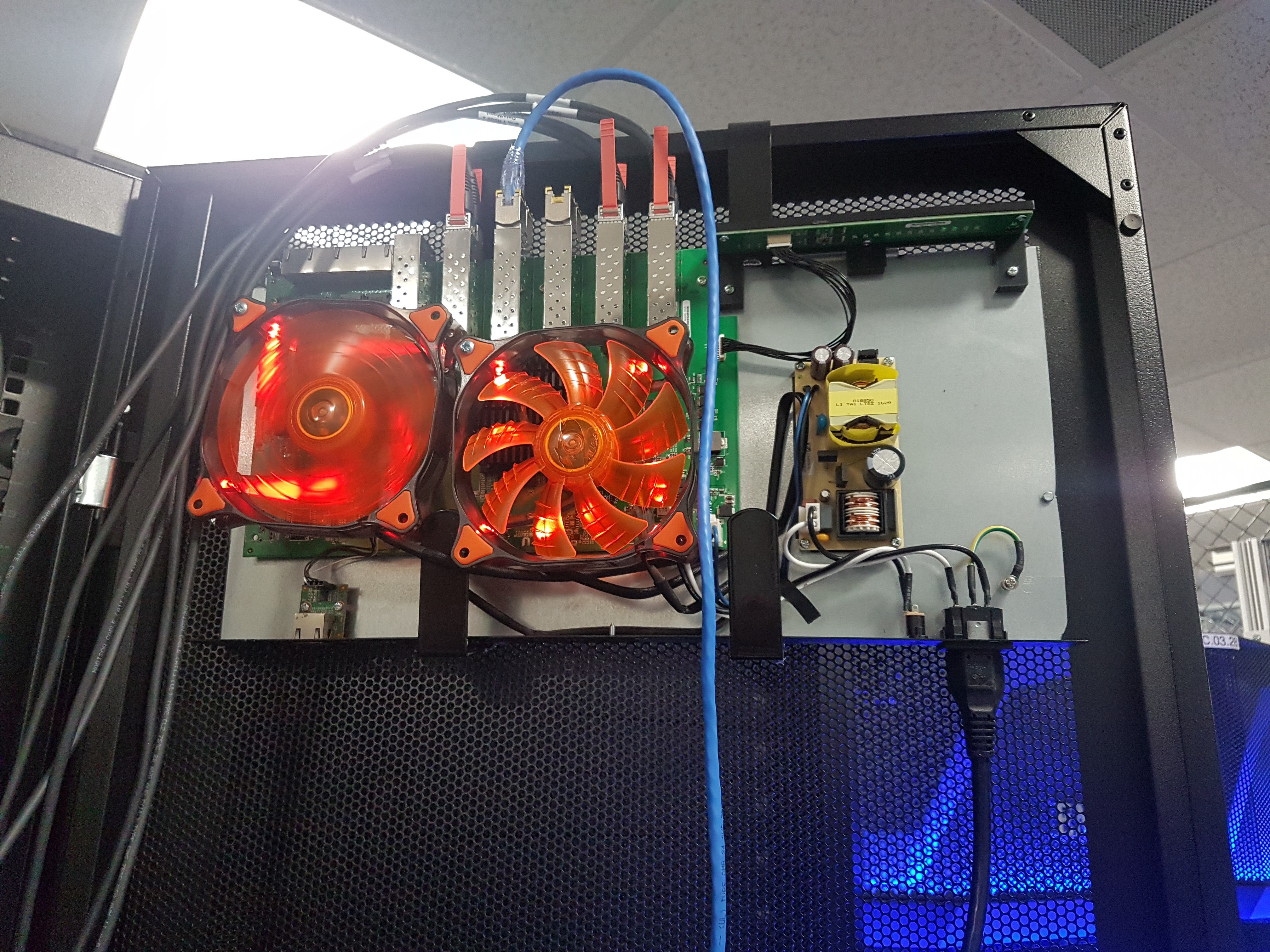

The final step was to connect the 10GbE networking. I’ve previously written about my 10GbE switch, which you can check out here. This is a cool action shot from a customer’s site:

As you can see, I took off the case, removed the 2 screaming fans that it came with, and replaced them with 2 x 120mm Cougar CF-D12HB-R fans (the switch had automatically stopped one of them in the picture because it was cool enough in the DC). With 2 glued-on garden wreath hangers, it hangs nicely on the front door of a rack, and on the back of my D-Frame Mini case:

For connectivity I used:

- 6 x 10Gtek SFP+, 10G 0.5-Meter SFP DAC Twinax, Passive, 2 for each host

- 3 x 1GbE for the IPMI ports, to power on the motherboards without a power switch
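Powering on the boards over IPMI is typically done with the `ipmitool` CLI. A minimal Python sketch that builds (and optionally runs) one `chassis power on` command per host is below; the BMC IP addresses and the `ADMIN` username are hypothetical placeholders, and it assumes `ipmitool` is installed and the password is exported as `IPMI_PASSWORD`.

```python
import subprocess

# Hypothetical BMC (IPMI) addresses for the three hosts; substitute your own.
BMC_HOSTS = ["192.168.1.201", "192.168.1.202", "192.168.1.203"]


def power_on_cmd(bmc: str, user: str) -> list:
    """Build an ipmitool command that powers on one motherboard over the network.

    -I lanplus uses the IPMI v2.0 RMCP+ LAN interface, and -E makes ipmitool
    read the password from the IPMI_PASSWORD environment variable, so it is
    not passed on the command line.
    """
    return ["ipmitool", "-I", "lanplus", "-H", bmc, "-U", user, "-E",
            "chassis", "power", "on"]


def power_on_all(user: str, dry_run: bool = True) -> list:
    """Power on every host, or just print the commands when dry_run is True."""
    cmds = [power_on_cmd(bmc, user) for bmc in BMC_HOSTS]
    for cmd in cmds:
        if dry_run:
            print(" ".join(cmd))
        else:
            # Needs ipmitool on PATH and IPMI_PASSWORD exported beforehand.
            subprocess.run(cmd, check=True)
    return cmds


if __name__ == "__main__":
    power_on_all(user="ADMIN")  # "ADMIN" is an assumed BMC username
```

Leaving `dry_run=True` as the default means the script only prints the commands until you explicitly ask it to touch the hardware.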

On the same switch I’m also using:

- 2 x 10GbE Twinax to the HCHL v1.0 ASRock hosts (middle SFP+ ports)

- 4 x 10GbE Twinax to a Rubrik r344 appliance (left SFP+ ports)

- 1 x 1GbE to the HCHL v1.0 switch (far left 10GBaseT port)
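Adding up both lists against the switch’s 12 SFP+ and 4 10GBaseT ports suggests every single port is in use. Here is a small Python sketch of that port-budget check; it assumes the 3 IPMI links sit on 10GBaseT ports, which the post implies but doesn’t state outright.

```python
# Port counts of the EdgeSwitch 16XG, as listed in the build above.
SWITCH_PORTS = {"SFP+": 12, "10GBASE-T": 4}

# (port type, number of links, what they connect to), from the two lists above.
# Assumption: the 3 IPMI links land on 10GBASE-T ports running at 1GbE.
LINKS = [
    ("SFP+", 6, "HCHL v2.0 hosts, 2 DACs each"),
    ("10GBASE-T", 3, "IPMI ports at 1GbE"),
    ("SFP+", 2, "HCHL v1.0 ASRock hosts"),
    ("SFP+", 4, "Rubrik r344 appliance"),
    ("10GBASE-T", 1, "HCHL v1.0 switch at 1GbE"),
]


def port_budget(ports: dict, links: list) -> dict:
    """Return the number of free ports per type; a negative value means over-allocated."""
    free = dict(ports)
    for port_type, count, _purpose in links:
        free[port_type] -= count
    return free


free = port_budget(SWITCH_PORTS, LINKS)
for port_type, remaining in free.items():
    print(f"{port_type}: {remaining} free")
```

Both port types come out at zero free, so any further expansion would need another switch or breakout cabling.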

You might have spotted the open power transformer at this point and be wondering if I’ve ever fallen foul of it. Yes, it hurt! But my reasoning is that I already have an open-air case, so it doesn’t make much difference having an open-air switch. There’s already a lot not to touch in there when it’s powered on anyway!

With the build complete, it was time to start installing a hypervisor. I wanted to go all in on HCI, and I liked the idea of not only integrated storage but also no need for separate management VMs such as vCenter, vROps, etc., plus a platform with a REST API end to end that I could automate. So, I started with Nutanix AHV, and I was prepared to divorce VMware vCenter/ESXi from my new lab. But due to idle host efficiency and HA configuration issues, I had to switch back to ESXi and trusty vSphere with vSAN (6.5 Update 1). For me, vSAN is the best thing to come out of VMware in years. Why? Because it disrupts a legacy market, it works, it’s efficient, and most importantly, it’s integrated! I just check a box and go. That’s what VMware does best, yet it has too frequently lost its way with appliances for everything. Everything should be integrated into ESXi and/or vCenter, IMHO. If VMware did this, they would significantly increase their revenue streams from additional products/licenses.

If you’d like to hear more detail on why I switched from AHV to ESXi/vSAN and about the build in general, and to see a demo of my vSphere Change Control DB script, then check out this cool vBrownBag episode here (thanks for hosting me, vBrownBag team!).

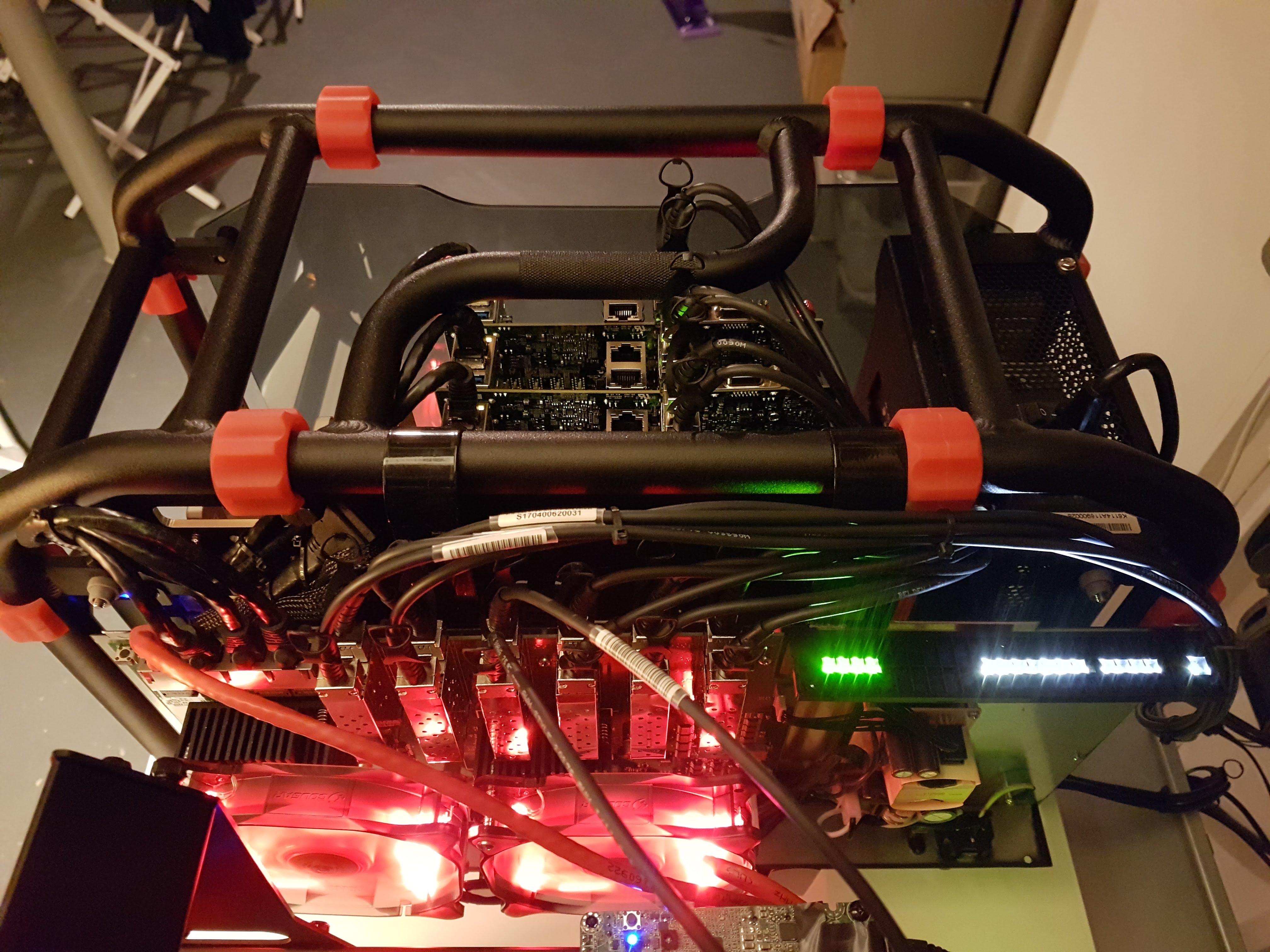

The next challenge I faced was heat. All was good in my lab running 15 or so VMs per host. But as soon as I started to push 30-40 VMs per host, the passive CPU heatsinks and 2 x 120mm fans weren’t cutting the mustard. Temperatures of 95+ degrees Celsius (203 Fahrenheit) under heavy load caused the top motherboard host to crash. Nor was this good for longevity or the value of my investment, because I need to run as many VMs as possible. This was my solution:

One fix alone wasn’t enough; it needed two. I first installed 4cm slimline fans on each motherboard heatsink, plus an additional 140mm Cougar fan (cunningly mounted with 3 zip ties!) to push more air up in between the motherboards:

The net result was a much more palatable and scalable 61 degrees Celsius (142 Fahrenheit) under heavy load:

With my issues fixed I could then start scaling my lab with some real VMs. As of 02/01/18, I’m now running:

- 50 x Ubuntu demo VMs

- 36 x Windows Server 2016 demo VMs (will expand to 40)

- 2 x Exchange DAG VMs

- 4 x SQL Availability Group VMs

- 12 x Standalone SQL Server VMs

- 1 x Oracle VM (will expand to 2)

- 1 x Nested Hyper-V VM (I need to deploy 1-2 VMs within the nested hypervisor)

- 1 x Nested AHV VM (same as above)

- 5 x File server VMs (mix of Windows & Linux)

- Plus vCenter, VPNServer, and Domain Controller VMs

This comes to a total of 115 VMs:

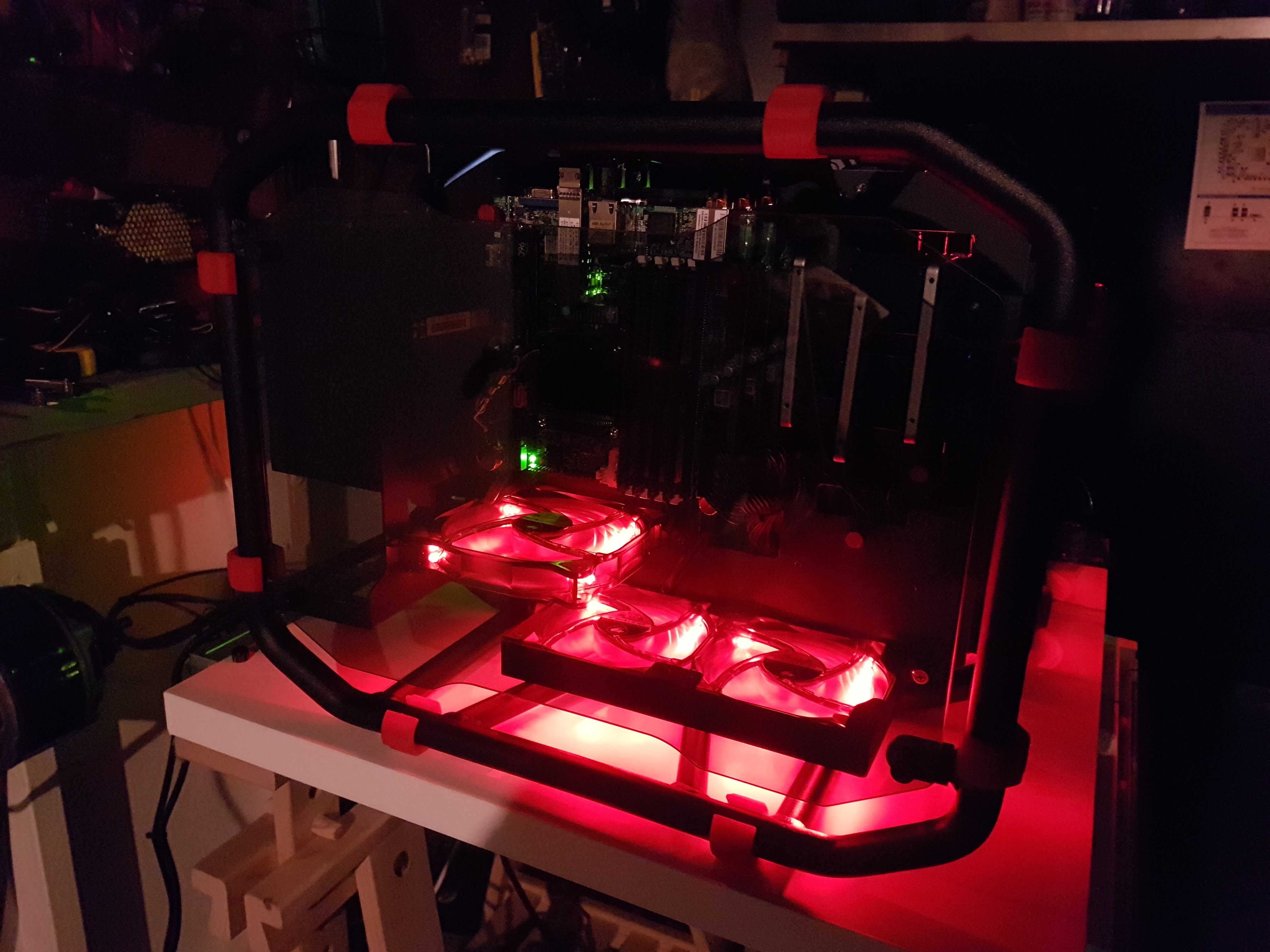
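The 115 figure is easy to double-check by summing the inventory above. The Python sketch below assumes one VM each for vCenter, the VPN server, and the domain controller, since the list doesn’t give counts for those.

```python
# VM counts from the inventory above.
# Assumption: exactly one VM each for vCenter, the VPN server and the domain controller.
VM_INVENTORY = {
    "Ubuntu demo": 50,
    "Windows Server 2016 demo": 36,
    "Exchange DAG": 2,
    "SQL Availability Group": 4,
    "Standalone SQL Server": 12,
    "Oracle": 1,
    "Nested Hyper-V": 1,
    "Nested AHV": 1,
    "File server": 5,
    "vCenter": 1,
    "VPN server": 1,
    "Domain controller": 1,
}
HOSTS = 3

total = sum(VM_INVENTORY.values())
demo_vms = VM_INVENTORY["Ubuntu demo"] + VM_INVENTORY["Windows Server 2016 demo"]
per_host = round(total / HOSTS, 1)
print(total, demo_vms, per_host)
```

That works out at roughly 38 VMs per host, right in the 30-40 per host range that caused the heat problems before the extra fans went in.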

So that’s it! Here are a couple more pictures of the hyperconverged home lab v2.0 in action:

If you’ve come this far in the post you are probably wondering how much this cost. Roughly, it came in at:

- Motherboards $2,563

- RAM $3,825

- Storage $2,666

- Switch $731

- Fans, cables, etc $300

- Case $300 (bought back in Jan 2015)

- PSU $110 (also bought Jan 2015)

- Total $10,495

While this might seem crazy at first, it works out at $291.53 per month over 36 months. The electricity bill won’t be huge, as it’s only 1 x 400W PSU and 1 x 10GbE switch, but let’s say this adds around $50 per month, including maintenance for replacement parts etc. Rounding up, this brings us to $350 a month for 3 years, a total of $12,600. If we compare this to the minimum VMware Cloud on AWS 3-year subscription in the US West (Oregon) region with 4 hosts, it’s $12,151.78 per month. Mine is $11,801.78 cheaper per month (with trial licenses, which I’m happy to refresh in a lab). Over 3 years, that comes to $437,464 vs $12,600! Looks like a bargain to me. Or at least, that’s how I justified it to my wife!
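For anyone wanting to rerun the numbers with their own prices, here is a Python sketch of the same cost comparison. The $50 running cost and the VMware Cloud on AWS price are the figures quoted above; rounding the monthly cost up to the nearest $50 is an assumption about how the $350 was reached.

```python
import math

# Hardware costs from the list above, in US dollars.
COSTS = {
    "Motherboards": 2563,
    "RAM": 3825,
    "Storage": 2666,
    "Switch": 731,
    "Fans, cables, etc": 300,
    "Case": 300,
    "PSU": 110,
}
MONTHS = 36
RUNNING_COST_PER_MONTH = 50      # rough estimate for power and replacement parts
VMC_ON_AWS_PER_MONTH = 12151.78  # 4-host, 3-year VMware Cloud on AWS figure quoted above

hardware_total = sum(COSTS.values())
amortized = hardware_total / MONTHS
exact_monthly = amortized + RUNNING_COST_PER_MONTH
lab_monthly = math.ceil(exact_monthly / 50) * 50  # assumption: round up to the nearest $50
lab_3yr = lab_monthly * MONTHS
vmc_3yr = VMC_ON_AWS_PER_MONTH * MONTHS
saving_per_month = VMC_ON_AWS_PER_MONTH - lab_monthly

print(f"Hardware total:      ${hardware_total:,}")
print(f"Amortized per month: ${amortized:,.2f}")
print(f"Lab per month:       ${lab_monthly:,} (exact ${exact_monthly:,.2f})")
print(f"Saving per month:    ${saving_per_month:,.2f}")
print(f"3-year totals:       ${lab_3yr:,} vs ${vmc_3yr:,.2f}")
```

Without the rounding, the lab works out at about $341.53 a month, so the $350 figure is slightly on the generous side.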

I know each VMware Cloud on AWS host has 4.5x the CPU and 4x the RAM of one of my hosts, but once HA reservations are accounted for, you probably wouldn’t run that many more VMs on 4 hosts in production than I’m running without them. The 86 demo VMs in my lab only have 1GB of RAM each; in production these would typically be 4GB and probably use 4x the CPU, so it evens out.

If you liked this post and love the lab as much as I do, then follow me on Twitter to see what else I’m up to. Thanks for reading,
