I was recently asked by a US Hospital network to help with importing externally sourced data from a CSV file into a Microsoft SQL database using PowerShell. They had tried a few different methods on their own, but their problem was that it wasn’t just a simple INSERT of the data required. Rather, they needed to also UPDATE existing records based on a matching ID if the CSV data already existed in the database.

A comma-separated value file (CSV) is one of the most common methods of transporting data between computing systems. By storing tabular data in plain text with a comma as a field separator, it is a universal file format used by many. To this end; being able to extract data from a CSV is a very useful skill to have in your toolkit.

So, how do you get data from a CSV into your Microsoft SQL Database and cope with both UPDATE and INSERT in a scalable way? This is exactly what this blog post in my series on using PowerShell and Microsoft SQL is going to help you do!

Start by downloading my example scripts from the link below:

Extract the zip file to C:\ImportingCSVsIntoSQLv1\. Within the folder you’ll see 3 files:

- ExampleData.csv

– A CSV file containing 50,000 rows of sample data for you to test the process - ImportingCSVsIntoSQLv1-Create.ps1

– Edit the $SQLInstance variable to a valid SQL instance and run the script to create a sample DB - ImportingCSVsIntoSQLv1-Import.ps1

– Edit the $SQLInstance variable to match the above, then run the script to see how to import a CSV

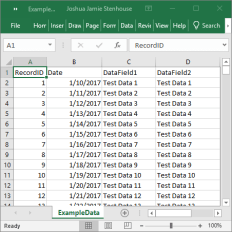

Both scripts were tested using PowerShell 5.1 on Windows 10 with SQL Express 2017. I recommend starting with the example data provided, then once you’ve mastered this take the Import.ps1 example and customize it to your exact use case. This way you’ve established a working baseline which you can always go back to if you’re not sure on what did or didn’t work. Here is what you should see after a successful first run on the example script:

My first version of this script queried the existing DB table, then performed a ForEach CSV row action in PowerShell which then did an UPDATE or an INSERT depending on whether the row already existed based on a matching ID. This worked great for INSERT, but the UPDATE proved unscalable. I tried with 50,000 rows and gave up after an hour of it running.

To solve this, I switched the script to create a “temporary table” in TempDB, insert the CSV data, then SQL MERGE between the source and target tables to combine the data. The script then deletes the temporary table to be a good citizen. The reason I put temporary table in quotation marks is because you can’t use SQL temporary tables, as they are immediately dropped on the next Invoke-Sqlcmd in the script. The workaround is to just create a regular table in TempDB and drop it to achieve the same effect. I found this post very useful in writing my example MERGE:

https://www.essentialsql.com/introduction-merge-statement/

This means that to merge 50,000 rows you now only need to perform 1 query rather than 50 thousand! With the sample data given my processing time for an UPDATE comes it at 49 seconds. Nice!

If you found this useful please tell me via the Drift chat what it helped you achieve. Happy scripting,

Joshua

Leave a comment